ChatGPT: An AI that can write Minetest mods... kinda

This article was written at a time when LLM AIs were brand new. As such, this post is very outdated and does not represent my current opinions.

OpenAI's [GPT-3](https://en.wikipedia.org/wiki/GPT-3) is a powerful new Artificial Intelligence model that uses deep learning to produce human-like text. It was trained on a large body of text, with sources including websites, Wikipedia, and books. It doesn't just understand natural language; it can also work with programming languages.

This topic is especially relevant with the recent introduction of [GitHub Copilot](https://github.com/features/copilot). Copilot is an "AI pair programmer" for your IDE that suggests code and entire new functions. It's based on the same technology as GPT-3, but uses Codex, a model derived from GPT-3 and optimised for code. This article will use GPT-3 and Codex, as I wasn't able to get GitHub Copilot, but the results should be very similar.

In this article, I will ask GPT-3 to write Minetest code and explore how much it knows about modding, creating simple and advanced Minetest mods. I will finish by using it to convert Minecraft mods to Minetest.


So, what is Minetest? Let’s ask GPT-3 to explain:

Explain what Minetest is and what its benefits are

Minetest is a free and open-source voxel game engine with easy modding and game creation capabilities. It has a strong emphasis on community-created content and user-friendliness. Some of Minetest’s benefits include being able to run on a wide variety of operating systems and hardware, having a fully customizable game engine, and being able to create and share mods and other content easily.

And how is GPT-3 able to write Minetest code?

Explain how GPT-3 knows and is able to write Minetest code

GPT-3 has been trained on a large number of code examples, including code for Minetest. As a result, it is able to identify patterns in the code and generate new code that is similar to the examples it has seen.

GPT-3 has a random element, and so can generate a different answer each time. This is expressed as temperature - the higher the temperature, the more randomness and the more creative the answers will be. But higher temperatures are also less accurate.

A common bias when people assess the output of GPT-3 is to select the best answers. I’ll be using the first answer in most cases, and will try to keep temperature low.
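To make the temperature setting a little more concrete, here is a minimal sketch of how temperature is commonly applied when a language model picks its next token. This is the textbook softmax-with-temperature idea, not OpenAI's actual implementation, and the scores below are made up for illustration.

```lua
-- Turn raw scores (logits) into probabilities, scaled by temperature.
-- Lower temperatures sharpen the distribution; higher ones flatten it.
local function softmax_with_temperature(logits, temperature)
    assert(temperature > 0, "temperature must be positive")

    -- Subtract the largest logit first for numerical stability.
    local max_logit = -math.huge
    for _, logit in ipairs(logits) do
        max_logit = math.max(max_logit, logit)
    end

    local exps, total = {}, 0
    for i, logit in ipairs(logits) do
        exps[i] = math.exp((logit - max_logit) / temperature)
        total = total + exps[i]
    end

    local probs = {}
    for i, e in ipairs(exps) do
        probs[i] = e / total
    end
    return probs
end

-- Hypothetical scores for three candidate next tokens.
local logits = { 2.0, 1.0, 0.5 }
local cold = softmax_with_temperature(logits, 0.2)
local hot = softmax_with_temperature(logits, 2.0)
print(string.format("top token: T=0.2 -> %.3f, T=2.0 -> %.3f", cold[1], hot[1]))
```

At the low temperature almost all of the probability lands on the highest-scoring token, which is why low temperatures give more repeatable answers.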

Generating simple Minetest mods #

Registering a node #

A node in Minetest is a position in the 3D grid; it’s known as a block in other games. For example, a node could be air, grass, dirt, or something else.

Most Minetest modding tutorials start with registering a type of node, which means that there will be a lot of documentation on the Internet about doing this. It seems likely that some of this information found its way into GPT-3’s training data.

Write Lua code that adds a node in Minetest

Write Lua code that adds a node in Minetest's world

    minetest.add_node({x=1, y=2, z=3}, {name="default:dirt"})

Huh. It modified the prompt to be about adding a node to Minetest’s world, rather than registering a node. I’m using GPT-3 in autocomplete mode here, where it attempts to finish the prompt you give it. In this situation, it decided that changing the request was the more likely completion. One way to fix this is by adding a full stop.

Running the prompt again resulted in a valid node registration, so it can work out the meaning, but not very reliably. It doesn’t help that “add” is a bit ambiguous. I changed “adds” to “registers” to be a bit more specific:

Write Lua code that registers a node in Minetest

    minetest.register_node("my_node", {
        description = "My Node",
        tiles = {"my_node.png"},
        groups = {cracky=3}
    })

OK, cool, it can do that. The node name doesn’t follow conventions, but that’s forgivable.

A node that quacks #

But how much does it actually understand? This code is very simple, and it would be easy for it to just echo it from tutorials. Let’s change the spec a little bit, to make it more unique:

Write Lua code that registers a node in Minetest that says quack when you punch it

    minetest.register_node("quack", {
        description = "Quack",
        tiles = {"default_wood.png"},
        on_punch = function(pos, node, player, pointed_thing)
            minetest.chat_send_player(player:get_player_name(), "Quack!")
        end,
    })

That’s cool. It’s able to understand that “say” means a chat message, and has also inferred a node name and description based on the prompt. The node name still doesn’t match the naming conventions, but the description is correctly in title case. GPT-3 is designed for finding patterns, so this isn’t too surprising.

Duck-Goose node #

This is still quite a simple example. Let’s see how it does with a more complicated problem:

Write Lua code that registers a node in Minetest that says duck when you punch it once, and goose when you punch it twice

    function duck_goose(self, clicker)
        if not clicker or not clicker:is_player() then
            return
        end
        if self.object:get_luaentity().ducks == 1 then
            self.object:set_properties({
                textures = {"goose.png"},
            })
            self.object:get_luaentity().ducks = 2
        elseif self.object:get_luaentity().ducks == 2 then
            self.object:set_properties({
                textures = {"duck.png"},
            })
            self.object:get_luaentity().ducks = 1
        end
    end

    minetest.register_node("example:duck", {
        description = "Duck/Goose",
        tiles = {"duck.png"},
        groups = {choppy=2,dig_immediate=2},
        on_punch = duck_goose,
        on_rightclick = duck_goose,
        ducks = 1,
    })

OK, this is interesting: it’s cargo-culting on steroids. Cargo-culting is when a programmer copies and combines code without understanding it. It’s mixed up the entity and node APIs big time, and has also gotten “duck” and “goose” the wrong way around. It’s also changing the texture rather than saying something. Theoretically, the code almost makes sense though.

A big part of the problem is how the prompt is phrased. Making the prompt a lot less ambiguous makes a big difference, unsurprisingly. I also switched to Edit mode, which I found worked a bit better.

Write this into Lua code for Minetest

A node that alternatives between saying duck and goose when punched

    minetest.register_node("duck_goose:duck_goose", {
        description = "Duck Goose",
        tiles = {"duck_goose.png"},
        groups = {cracky=3},
        on_punch = function(pos, node, puncher)
            local meta = minetest.get_meta(pos)
            local say = meta:get_string("say")
            if say == "duck" then
                minetest.chat_send_all("Goose!")
                meta:set_string("say", "goose")
            else
                minetest.chat_send_all("Duck!")
                meta:set_string("say", "duck")
            end
        end,
    })

This works, although it isn’t entirely optimal. It would be better to use an integer rather than a string to keep track.

And yes, I did make a typo in the prompt.
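For comparison, here is a rough sketch of the integer-based version suggested above. It is my own illustration rather than GPT-3 output; the node name, the `state` metadata key, and the `word_for` helper are all hypothetical. It relies on the node metadata methods `get_int` and `set_int`, where `get_int` returns 0 for a key that has never been set.

```lua
-- Hypothetical lookup: state 0 says "Duck!", state 1 says "Goose!".
local WORDS = { [0] = "Duck!", [1] = "Goose!" }

local function word_for(state)
    return WORDS[state % 2]
end

-- Guarded so that the helper above can also be tried in a plain Lua
-- interpreter, where the `minetest` global does not exist.
if minetest then
    minetest.register_node("duck_goose:duck_goose_int", { -- hypothetical name
        description = "Duck Goose",
        tiles = {"duck_goose.png"},
        groups = {cracky = 3},
        on_punch = function(pos, node, puncher)
            local meta = minetest.get_meta(pos)
            -- A freshly placed node has no "state" yet, so this reads 0.
            local state = meta:get_int("state")
            minetest.chat_send_all(word_for(state))
            -- Flip between 0 and 1 for the next punch.
            meta:set_int("state", (state + 1) % 2)
        end,
    })
end
```

As in the generated version, the first punch says "Duck!" and the words alternate from there.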

Explaining code #

Explaining the Quack node #

It would be nice to find out just how well GPT-3 can understand the code. GPT-3 is very good at noticing patterns and regurgitating, but is it able to explain what the code is doing?

Using edit mode, I asked it to add comments to the first Quack node:

Add comments to the code

<Quack node code>

    -- create a duck block
    minetest.register_node("quack", {
        -- set the description to 'Quack'
        description = "Quack",
        -- use the default wood texture
        tiles = {"default_wood.png"},
        -- when the player punches the block, the chat will send them the string 'Quack!'
        on_punch = function(pos, node, player, pointed_thing)
            minetest.chat_send_player(player:get_player_name(), "Quack!")
        end,
    })

The results of this prompt varied quite a lot, from detailed comments to a single comment for the code. The above was the result of the first run, but in other runs GPT-3 was wrong (saying the node made a duck sound) or just unhelpful (writing “this is a comment”).

Next, I thought I’d try getting it to explain the code as prose:

Explain what this code does

<Quack node code>

This code registers a new node type, “quack”, which has the default wood texture and sends the player the message “Quack!” when punched

Full marks there, I guess.

Explaining actual mod code #

The Quack node is a fairly simple example, exactly the sort of thing you’d find in a Minetest tutorial. How well does GPT-3 do on actual mod code?

I asked GPT-3 to explain the code for health bars in Conquer, my RTS mod for Minetest.

Explain what this code does to a beginner

<Conquer code>

This code creates an entity called a “healthbar” which is used to show a player’s health in the game “conquer”. The entity is a sprite, which is an image that is displayed in the game, and it is immortal, meaning it can not be killed. The healthbar is updated based on the health of the player it is attached to. If the player’s health changes, the healthbar will change to reflect that. If the player dies, the healthbar will be removed.

This is almost entirely correct; the only inaccuracy is that it’s attached to a unit entity and not a player. There’s absolutely no way it could have known this though, given the code snippet doesn’t mention units at all.

Working with GUIs (Formspecs) #

Generating a simple formspec #

Lua is a fairly common programming language, used by many more games and programs than Minetest. But what about formspecs, a domain-specific language for defining GUIs in Minetest?

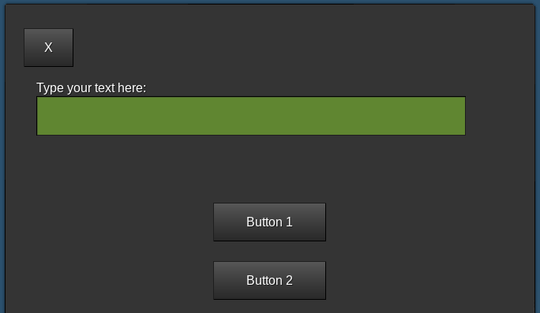

Write a Minetest formspec with a field and two buttons

    form = "size[8,8]" ..
        -- titlebar
        "button_exit[0,0;1,1;exit;X]" ..
        -- text input field
        "field[0.5,1.5;7,1;text;Type your text here:;]" ..
        -- buttons
        "button[3,3;2,1;button1;Button 1]" ..
        "button[3,4;2,1;button2;Button 2]"

It took some creative liberty by adding a close button to the top, but it has fulfilled the requirements.

Manipulating formspecs #

What about formspec code that doesn’t have surrounding text? I provided it with the following code snippet:

    size[8,3]
    label[0.5,0.1;Hello everyone!]
    field[0.5,1;3.5,1;name;Name;]

I started by wondering if it could detect the language:

Write the name of the language in a comment

<formspec code>

    // Minetest Formspec
    ...

That’s cool! Weird that it chose //, given that formspecs are written inside Lua, which uses --. I guess // is more common.

What about manipulating the formspec?

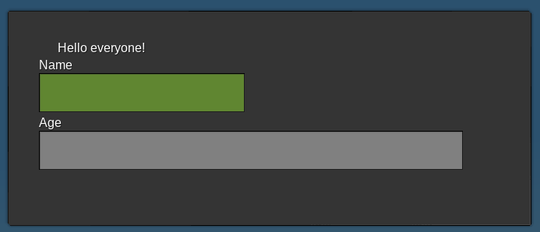

Add another text box for age twice the width of the name field

<formspec code>

    size[8,3]
    label[0.5,0.1;Hello everyone!]
    field[0.5,1;3.5,1;name;Name;]
    field[0.5,2;7,1;age;Age;]

That’s correct, and it has also placed it without overlap. I used “text box” in the prompt to avoid biasing it by saying “field”.

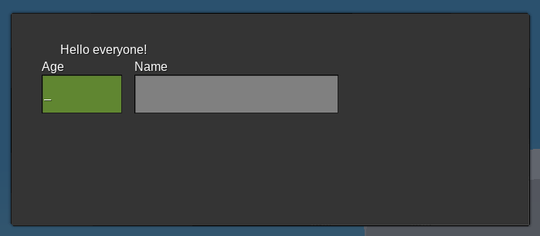

Does it understand directions?

Add another field for age to the left of the name field

<formspec code>

    size[8,3]
    label[0.5,0.25;Hello everyone!]
    field[0.5,1;1.5,1;age;Age;]
    field[2,1;3.5,1;name;Name;]

It even moved the name field out of the way, rather than going into the negatives.

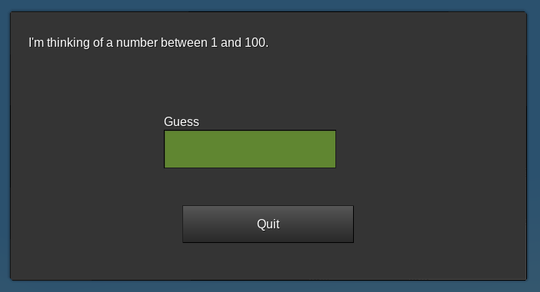

Guessing game #

Next, I wondered if I could get it to generate a guessing game formspec, with a formspec submission callback.

Write into Lua as a Minetest mod

Create a guessing game formspec

    minetest.register_on_player_receive_fields(function(player, formname, fields)
        if formname ~= "guess" then
            return
        end
        if fields.quit then
            return
        end
        local guess = tonumber(fields.guess)
        if guess == nil then
            minetest.chat_send_player(player:get_player_name(), "Please enter a number.")
            return
        end
        if guess < 1 or guess > 100 then
            minetest.chat_send_player(player:get_player_name(), "Please enter a number between 1 and 100.")
            return
        end
        if guess == 42 then
            minetest.chat_send_player(player:get_player_name(), "You win!")
        else
            minetest.chat_send_player(player:get_player_name(), "Sorry, try again.")
        end
    end)

    minetest.register_chatcommand("guess", {
        params = "",
        description = "Play a guessing game",
        func = function(name, param)
            local player = minetest.get_player_by_name(name)
            if player == nil then
                -- just a check to prevent the server crashing
                return false, "Player not found"
            end
            minetest.show_formspec(name, "guess",
                "size[8,4]" ..
                "label[0,0;I'm thinking of a number between 1 and 100.]" ..
                "field[2.5,2;3,1;guess;Guess;]" ..
                "button_exit[2.5,3;3,1;quit;Quit]")
        end,
    })

OK, now it’s scaring me.

It’s missing a way to submit the guess - there’s only a quit button. It works if you add this, though.
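As a rough illustration of the missing piece, this is the same formspec with a submit button added. The button name `submit`, its label, and the positions are my own choices and not part of the generated output. The generated callback only checks for `fields.quit`, so any other submission is treated as a guess.

```lua
-- Same layout as the generated formspec, plus a hypothetical submit button.
local guess_formspec =
    "size[8,4]" ..
    "label[0,0;I'm thinking of a number between 1 and 100.]" ..
    "field[2.5,2;3,1;guess;Guess;]" ..
    -- A plain button sends the fields without closing the form.
    "button[1,3;3,1;submit;Submit]" ..
    "button_exit[4,3;3,1;quit;Quit]"

-- Inside Minetest this would be shown from the chat command with:
--   minetest.show_formspec(name, "guess", guess_formspec)
print(guess_formspec)
```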

I found the “just a check to prevent the server crashing” comment quite interesting. I wondered whether this was taken straight out of Minetest mods. A quick search on GitHub revealed that this exact comment is used quite frequently by mods in chat commands.

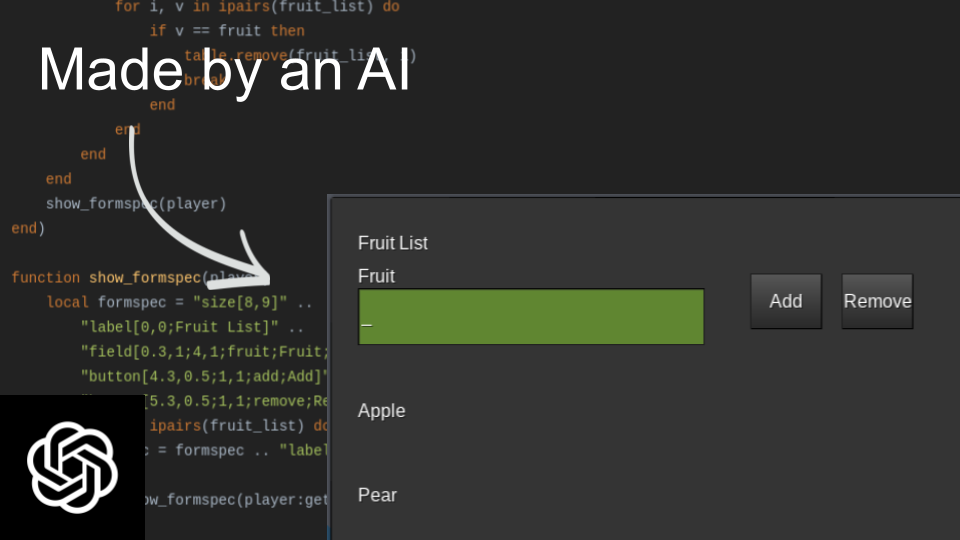

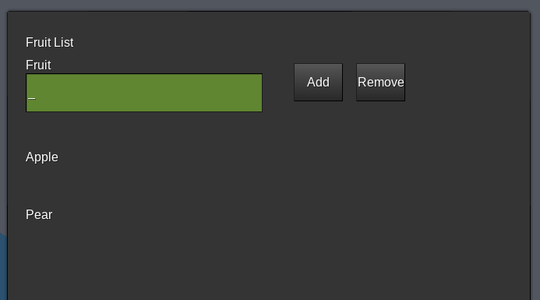

List of fruit editor #

Guessing games are fairly common, what about something more complicated?

Write into Lua as a Minetest mod

Create a formspec that shows and allows maintaining a list of fruit

    minetest.register_on_player_receive_fields(function(player, formname, fields)
        if formname ~= "fruit_list" then
            return
        end
        if fields.add then
            local fruit = fields.fruit
            if fruit ~= "" then
                table.insert(fruit_list, fruit)
            end
        elseif fields.remove then
            local fruit = fields.fruit
            if fruit ~= "" then
                for i, v in ipairs(fruit_list) do
                    if v == fruit then
                        table.remove(fruit_list, i)
                        break
                    end
                end
            end
        end
        show_formspec(player)
    end)

    function show_formspec(player)
        local formspec = "size[8,9]" ..
            "label[0,0;Fruit List]" ..
            "field[0.3,1;4,1;fruit;Fruit;]" ..
            "button[4.3,0.5;1,1;add;Add]" ..
            "button[5.3,0.5;1,1;remove;Remove]"
        for i, v in ipairs(fruit_list) do
            formspec = formspec .. "label[0," .. (i + 1) .. ";" .. v .. "]"
        end
        minetest.show_formspec(player:get_player_name(), "fruit_list", formspec)
    end

    minetest.register_chatcommand("fruit", {
        params = "",
        description = "Show fruit list",
        func = function(name, param)
            local player = minetest.get_player_by_name(name)
            if player == nil then
                return false, "Player not found"
            end
            show_formspec(player)
            return true, "Fruit list shown"
        end,
    })

Wow. It’s just missing a definition of fruit_list, and should be using minetest.formspec_escape to handle the case where a fruit name has special characters. I think it’s interesting how it went with labels instead of a text list.
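Here is a small sketch of those two fixes: a definition for `fruit_list`, and escaping each fruit name before it goes into the formspec. The `build_fruit_formspec` helper and its optional `escape` parameter are hypothetical; the parameter exists only so the function can be tried outside the engine, and it defaults to the real `minetest.formspec_escape`.

```lua
-- The list that the generated code refers to but never defines.
local fruit_list = {}

-- Builds the formspec string for a list of fruit names.
-- `escape` defaults to Minetest's own formspec escaping function.
local function build_fruit_formspec(fruits, escape)
    escape = escape or minetest.formspec_escape
    local parts = {
        "size[8,9]",
        "label[0,0;Fruit List]",
        "field[0.3,1;4,1;fruit;Fruit;]",
        "button[4.3,0.5;1,1;add;Add]",
        "button[5.3,0.5;1,1;remove;Remove]",
    }
    for i, fruit in ipairs(fruits) do
        -- Escape player-supplied text so characters such as ] or ;
        -- cannot break out of the label element.
        parts[#parts + 1] = "label[0," .. (i + 1) .. ";" .. escape(fruit) .. "]"
    end
    return table.concat(parts)
end
```

Inside a mod, the generated `show_formspec` function could then call `minetest.show_formspec(player:get_player_name(), "fruit_list", build_fruit_formspec(fruit_list))`.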

Minecraft to Minetest conversion #

One thing that could indicate deep understanding would be the ability to convert between different modding APIs. I found the following hello world example in a Minecraft Forge modding tutorial:

    package minecraftfun;

    import net.minecraft.util.text.TextComponentString;
    import net.minecraftforge.event.world.BlockEvent.PlaceEvent;
    import net.minecraftforge.fml.common.eventhandler.SubscribeEvent;

    public class BlockPlaceHandler {
        @SubscribeEvent
        public void onPlaceEvent(PlaceEvent event) {
            event.getPlayer().sendMessage(
                new TextComponentString("You placed a block : " + event.getPlacedBlock().getBlock().getLocalizedName()));
        }
    }

The first prompt I tried ended up just changing the package name in the first line to minetest rather than rewriting it into Lua.

Rewrite the following code into Minetest

<Java code>

    package minetestfun;
    ...

The second time, I explicitly mentioned Lua:

Write into Lua as a Minetest mod

<Java code>

    --[[
    This is a Minetest mod that writes into a file when a block is placed.
    It is written in Lua.
    ]]

    import net.minecraft.util.text.TextComponentString;
    import net.minecraftforge.event.world.BlockEvent.PlaceEvent;
    import net.minecraftforge.fml.common.eventhandler.SubscribeEvent;

    public class BlockPlaceHandler {
        @SubscribeEvent
        public void onPlaceEvent(PlaceEvent event) {
            event.getPlayer().sendMessage(
                new TextComponentString("You placed a block : " + event.getPlacedBlock().getBlock().getLocalizedName()));
        }
    }

No, adding a comment saying “It is written in Lua” doesn’t make it written in Lua. The comment about writing to a file is also completely incorrect.

Next, I went back to saying “Rewrite”:

<Java code>

    minetest.register_on_placenode(function(pos, newnode, placer, oldnode, itemstack, pointed_thing)
        if placer:is_player() then
            minetest.chat_send_player(placer:get_player_name(), "You placed a block : "..newnode.name)
        end
    end)

Woah! That’s exactly what I wanted. It even checks that placer is a player, which is something that many modders forget to do.

The only thing it does do wrong is newnode.name. It should get the node’s description from the node definition. This can be done either by using ItemStack(newnode.name):get_description() or by looking up the node def from minetest.registered_nodes. This mistake is understandable though, given that the Java code uses “name” for what Minetest calls “description”.
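A sketch of the second option, looking the description up in `minetest.registered_nodes`, might look like the following. The `describe_node` helper is hypothetical, and falling back to the technical name when no description exists is my own choice.

```lua
-- Returns the human-readable description for a node name, falling back
-- to the technical name when no description is registered.
local function describe_node(name, registered_nodes)
    local def = registered_nodes[name]
    if def and def.description and def.description ~= "" then
        return def.description
    end
    return name
end

-- Guarded so that the helper above can also be tried in a plain Lua
-- interpreter, where the `minetest` global does not exist.
if minetest then
    minetest.register_on_placenode(function(pos, newnode, placer)
        if placer and placer:is_player() then
            minetest.chat_send_player(placer:get_player_name(),
                "You placed a block : " ..
                describe_node(newnode.name, minetest.registered_nodes))
        end
    end)
end
```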

As mentioned, GPT-3 includes randomness; it doesn’t always produce the same result. I ran it again, with a lower temperature, and it made up an API function called send_message:

<Java code>

    minetest.register_on_placenode(function(pos, newnode, placer, oldnode, itemstack, pointed_thing)
        placer:send_message("You placed a block: "..newnode.name)
    end)

Conclusion #

GPT-3/Codex is surprisingly intelligent and can produce working Minetest mods and formspecs. However, it frequently produces results that look like cargo-culting and making stuff up, and it can also produce vulnerable code. It also requires effort from a human to write good prompts and identify problems.

GitHub’s Copilot uses another AI model from OpenAI called Codex. It’s very closely related to GPT-3 but specialises in code rather than natural language. Copilot uses this model in IDEs, such as VSCode, to suggest code.

I’ll probably look into GitHub Copilot in the future, but I imagine it’ll produce very similar results just with better IDE integration.

GPT-3/Codex learned how to write Minetest code by reading code on the Internet. This code may or may not be open source, and may or may not be permissively licensed. These models tend to regurgitate code, which leads to license laundering - open source code being turned into proprietary code, without credit. These products benefit from the unpaid labour of the open-source community. So whilst this technology is interesting, I’m not sure how much I agree with it ethically.

You can try out GPT-3 and Codex for free on OpenAI’s website, and GitHub Copilot is now available publicly.

TL;DR #

- It can state some facts about Minetest

- It can create small mods based on human language descriptions

- It can explain what Minetest code does in comments or prose

- It can understand Minetest’s domain-specific language for GUIs (formspecs) and can manipulate them

- It can convert Minecraft Java code into Minetest Lua code

Comments

Very good article. I look forward to seeing more and better examples of Minetest/Minecraft and AI.

This is good stuff. But I think GPT 5 has sure improved a lot

Definitely has. At the same time, my attitude towards it has gone from “oh this is cool and novel, hope no one uses it in production” to “oh my god please stop forcing AI everywhere and using it in production”